Hypothesis test calculator online accept or reject

Alternatively, Beta is the probability of committing a Type II error in the long run. In this context, alpha is the probability of committing a Type I error in the long run. This dichotomization allows distinguishing correct results (rejecting H0 when there is an effect and not rejecting H0 when there is no effect) from errors (rejecting H0 when there is no effect, the type I error, and not rejecting H0 when there is an effect, the type II error). Neyman & Pearson (1928) introduced the notion of critical intervals, therefore dichotomizing the space of possible observations into correct vs. This differs markedly from Fisher who proposed a general approach for scientific inference conditioned on the null hypothesis only. The first key concept in this approach, is the establishment of anĪlternative hypothesis along with an a priori effect size. In such framework, two hypotheses are proposed: the null hypothesis of no effect and the alternative hypothesis of an effect, along with a control of the long run probabilities of making errors.

Neyman & Pearson (1933) proposed a framework of statistical inference for applied decision making and quality control. Neyman-Pearson, hypothesis testing, and the α-value This common misconception arises from a confusion between the probability of an observation given the null p(Obs≥t|H0) and the probability of the null given an observation p(H0|Obs≥t) that is then taken as an indication for p(H0) (see Is not the probability of the null hypothesis p(H0), of being true, ( Some authors have even argued that the more (a priori) implausible the alternative hypothesis, the greater the chance that a finding is a false alarm ( Because a low p-value only indicates a misfit of the null hypothesis to the data, it cannot be taken as evidence in favour of a specific alternative hypothesis more than any other possible alternatives such as measurement error and selection bias ( Is not an indication favouring a given hypothesis. In low powered studies (typically small number of subjects), the p-value has a large variance across repeated samples, making it unreliable to estimate replication ( Because the p-value depends on the number of subjects, it can only be used in high powered studies to interpret results. Often, a small value of p is considered to mean a strong likelihood of getting the same results on another try, but again this cannot be obtained because the p-value is not informative on the effect itself (

/HypothesisTestinginFinance1_2-1030333b070c450c964e82c33c937878.png)

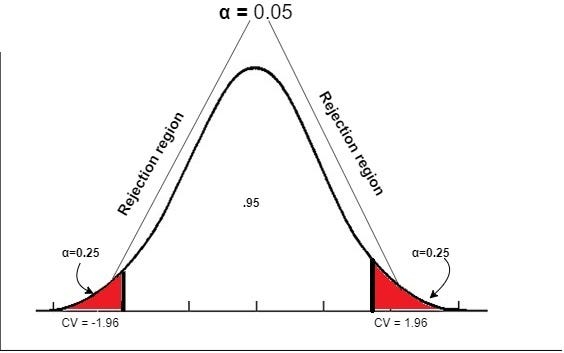

Is not the probability to replicate an effect. In addition, while p-values are randomly distributed (if all the assumptions of the test are met) when there is no effect, their distribution depends of both the population effect size and the number of participants, making impossible to infer strength of effect from them. Any interpretation of the p-value in relation to the effect under study (strength, reliability, probability) is wrong, since p-values are conditioned on H0. Is not an indication of the strength or magnitude of an effect. How small the level of significance is, is thus left to researchers. A key aspect of Fishers’ theory is that only the null-hypothesis is tested, and therefore p-values are meant to be used in a graded manner to decide whether the evidence is worth additional investigation and/or replication (įisher, 1971 page 13: ‘it is open to the experimenter to be more or less exacting in respect of the smallness of the probability he would require ’ and ‘no isolated experiment, however significant in itself, can suffice for the experimental demonstration of any natural phenomenon’). Fisher recommended using p=0.05 to judge whether an effect is significant or not as it is roughly two standard deviations away from the mean for the normal distribution (įisher, 1934 page 45: ‘The value for which p=.05, or 1 in 20, is 1.96 or nearly 2 it is convenient to take this point as a limit in judging whether a deviation is to be considered significant or not’). Level of significance (a theoretical p-value) that acts as a reference point to identify significant results, that is to identify results that differ from the null-hypothesis of no effect.

The approach proposed is of ‘proof by contradiction’ (Ĭhristensen, 2005), we pose the null model and test if data conform to it. It is equal to the area under the null probability distribution curve from the observed test statistic to the tail of the null distribution (Įt al., 2004). This probability or p-value reflects (1) the conditional probability of achieving the observed outcome or larger: p(Obs≥t|H0), and (2) is therefore a cumulative probability rather than a point estimate. t value), assuming the null hypothesis of no effect is true.

Fisher, significance testing, and the p-valueįisher, 1959) allows to compute the probability of observing a result at least as extreme as a test statistic (e.g.
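The ideas above can be made concrete with a small simulation. Three of them are shown: the p-value as a tail area under the null distribution, alpha and beta as long-run error rates, and the instability of p-values in low-powered studies. What follows is a minimal sketch under stated assumptions, not a reference implementation.

- It uses a one-sample z-test with a known standard deviation instead of the t test mentioned in the text. This keeps the example to the Python standard library, since the normal tail area is available through `math.erfc`.
- All function names are hypothetical.
- The effect size (0.5), the sample sizes, and the prior probability of H1 in `false_alarm_share` are made-up illustrative values.

```python
import math
import random
import statistics


def two_sided_p(z: float) -> float:
    """Tail area p(|Z| >= |z| | H0) under a standard normal null distribution."""
    # erfc(|z| / sqrt(2)) equals 2 * (1 - Phi(|z|)): the area in both tails beyond |z|.
    return math.erfc(abs(z) / math.sqrt(2))


def z_test_p(sample: list[float], sigma: float = 1.0) -> float:
    """Hypothetical one-sample z-test of H0: mean = 0, assuming sigma is known."""
    z = statistics.fmean(sample) / (sigma / math.sqrt(len(sample)))
    return two_sided_p(z)


def simulate_p_values(true_mean: float, n: int, reps: int = 10_000,
                      sigma: float = 1.0, seed: int = 1) -> list[float]:
    """p-values from many repeated experiments, each drawing n values from N(true_mean, sigma)."""
    rng = random.Random(seed)
    return [
        z_test_p([rng.gauss(true_mean, sigma) for _ in range(n)], sigma)
        for _ in range(reps)
    ]


def rejection_rate(p_values: list[float], alpha: float = 0.05) -> float:
    """Long-run proportion of experiments in which H0 is rejected at level alpha."""
    return sum(p <= alpha for p in p_values) / len(p_values)


def false_alarm_share(prior_h1: float, alpha: float, power: float) -> float:
    """Share of significant results that are Type I errors, given an assumed prior P(H1)."""
    false_pos = alpha * (1 - prior_h1)  # H0 true, yet rejected
    true_pos = power * prior_h1         # H1 true and detected
    return false_pos / (false_pos + true_pos)


if __name__ == "__main__":
    # Fisher's convention: |z| = 1.96 corresponds to p of about 0.05.
    print(round(two_sided_p(1.96), 3))

    # Under H0 the p-values are uniform, so about alpha of them fall below alpha.
    print("Type I error rate:", rejection_rate(simulate_p_values(0.0, n=20)))

    # With a true effect, the rejection rate estimates the power, 1 - beta.
    print("power:", rejection_rate(simulate_p_values(0.5, n=20)))

    # Same effect, different sample sizes: p-values spread widely when power is low.
    for size in (10, 80):
        q1, q2, q3 = statistics.quantiles(simulate_p_values(0.5, n=size), n=4)
        print(f"n={size}: p-value quartiles {q1:.3g}, {q2:.3g}, {q3:.3g}")

    # The less plausible H1 is a priori, the larger the share of false alarms.
    print(round(false_alarm_share(prior_h1=0.1, alpha=0.05, power=0.8), 2))
```

The rejection rates come from sampling, so they are close to, but not exactly equal to, the theoretical alpha and power. A t-test version would need the Student t distribution (for example from SciPy) in place of `math.erfc`. The long-run logic of repeating the experiment and counting rejections stays the same.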